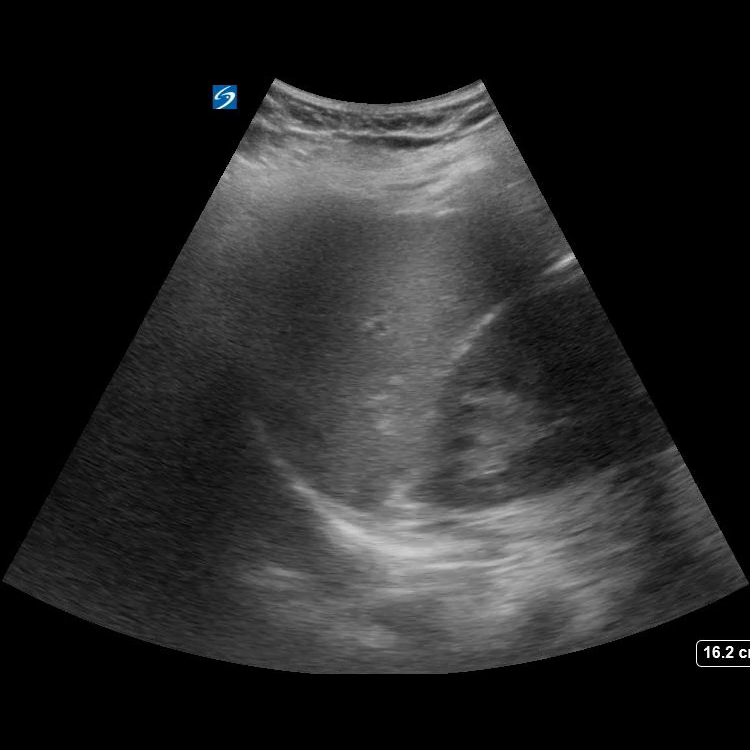

We developed an AI model that detects the adequacy and positivity of ultrasound images for the FAST exam (Focused Assessment with Sonography in Trauma [1]). The results have been accepted for publication in the Journal of Trauma and Acute Care Surgery.

We deployed the model (based on DenseNet-121 [2]) on an edge device (NVIDIA Jetson TX2 [3]) and, using TensorRT [4] performance optimizations, achieved faster-than-real-time performance: 19 fps measured on a video, versus the 15 fps expected from an ultrasound device. The model is trained to recognize adequate views of the LUQ and RUQ (left and right upper quadrants) and trauma-positive views. The video below demonstrates the model's predictions of view adequacy.
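The frame-rate claim comes down to simple arithmetic: at 15 fps the ultrasound device delivers a new frame every 1000/15 ≈ 66.7 ms, so the model keeps up as long as it processes frames at least that fast. The sketch treats the reported 19 fps as sequential throughput, i.e. roughly 52.6 ms per frame on average; that reading is an assumption, since pipelined inference can show higher per-frame latency than 1/throughput.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available (or spent) per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def keeps_up(model_fps: float, source_fps: float) -> bool:
    """True if inference throughput is at least the incoming frame rate."""
    return model_fps >= source_fps


SOURCE_FPS = 15.0  # frame rate expected from the ultrasound device (from the post)
MODEL_FPS = 19.0   # measured throughput on Jetson TX2 with TensorRT (from the post)

budget = frame_budget_ms(SOURCE_FPS)    # about 66.7 ms available per frame
per_frame = frame_budget_ms(MODEL_FPS)  # about 52.6 ms per frame, assuming sequential processing
headroom = MODEL_FPS / SOURCE_FPS       # about 1.27x faster than real time

print(f"budget {budget:.1f} ms, used {per_frame:.1f} ms, headroom {headroom:.2f}x")
```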

The device can be used as a training tool for inexperienced ultrasound operators, helping them obtain adequate views and estimating the probability of a positive FAST exam.
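The post does not describe how per-frame predictions are turned into feedback for the operator. As one possible illustration, this sketch assumes the classifier emits one logit per label (view adequacy and FAST positivity), converts each to a probability with a sigmoid, and smooths it across frames with an exponential moving average so the displayed estimate does not flicker as the probe moves. The two-logit output, `SmoothedProbability`, `alpha`, and the sample logits are all hypothetical and are not taken from the authors' system.

```python
import math
from dataclasses import dataclass
from typing import Optional


def sigmoid(logit: float) -> float:
    """Map a raw model output (logit) to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-logit))


@dataclass
class SmoothedProbability:
    """Exponential moving average of per-frame probabilities (hypothetical helper)."""

    alpha: float = 0.2  # weight given to the newest frame; illustrative value only
    value: Optional[float] = None

    def update(self, p: float) -> float:
        # The first frame initializes the estimate; later frames are blended in.
        if self.value is None:
            self.value = p
        else:
            self.value = self.alpha * p + (1.0 - self.alpha) * self.value
        return self.value


# Hypothetical per-frame logits for (adequacy, positivity) from the classifier.
frame_logits = [(2.0, -1.0), (1.5, -0.5), (-0.5, -2.0), (2.5, 0.0)]

adequacy = SmoothedProbability(alpha=0.3)
positivity = SmoothedProbability(alpha=0.3)
for adequacy_logit, positivity_logit in frame_logits:
    p_adequate = adequacy.update(sigmoid(adequacy_logit))
    p_positive = positivity.update(sigmoid(positivity_logit))
    print(f"adequate view: {p_adequate:.2f}  positive FAST: {p_positive:.2f}")
```

A larger `alpha` reacts faster when the operator moves the probe but is noisier; a smaller one gives a steadier readout that lags behind the current view.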

This project was done in collaboration with the University of Kentucky Department of Surgery. The annotated data were provided by Brittany E. Levy and Jennifer T. Castle.

[1] https://www.ncbi.nlm.nih.gov/books/NBK470479/

[2] Huang G, Liu Z, van der Maaten L, Weinberger KQ. Densely Connected Convolutional Networks. Proceedings of the 30th IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2017). 2017:2261-2269. DOI: 10.48550/arXiv.1608.06993

[3] https://developer.nvidia.com/embedded/jetson-tx2

[4] https://developer.nvidia.com/tensorrt